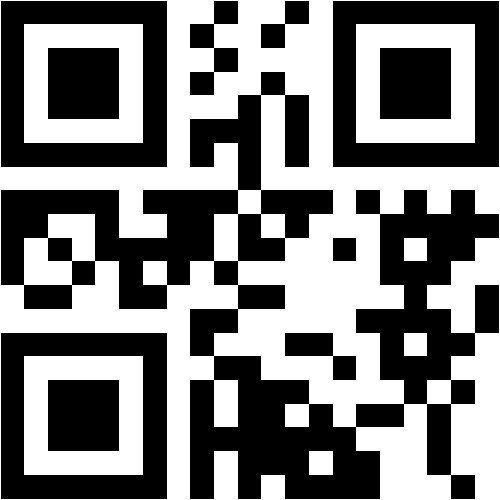

Luis M. de Campos

@decsai.ugr.es

Computer Science and Artificial Intelligence

University of Granada

RESEARCH, TEACHING, or OTHER INTERESTS

Artificial Intelligence, Information Systems

Scopus Publications

Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, Francisco J. Ribadas-Pena, and Néstor Bolaños

MDPI AG

In the context of academic expert finding, this paper investigates and compares the performance of information retrieval (IR) and machine learning (ML) methods, including deep learning, to approach the problem of identifying academic figures who are experts in different domains when a potential user requests their expertise. IR-based methods construct multifaceted textual profiles for each expert by clustering information from their scientific publications. Several methods fully tailored for this problem are presented in this paper. In contrast, ML-based methods treat expert finding as a classification task, training automatic text classifiers using publications authored by experts. By comparing these approaches, we contribute to a deeper understanding of academic-expert-finding techniques and their applicability in knowledge discovery. These methods are tested with two large datasets from the biomedical field: PMSC-UGR and CORD-19. The results show how IR techniques were, in general, more robust with both datasets and more suitable than the ML-based ones, with some exceptions showing good performance.

Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

Springer Science and Business Media LLC

In the context of content-based recommender systems, the aim of this paper is to determine how better profiles can be built and how these affect the recommendation process based on the incorporation of temporality, i.e. the inclusion of time in the recommendation process, and topicality, i.e. the representation of texts associated with users and items using topics and their combination. To that end, we build both topically and temporally homogeneous subprofiles to represent items. The main contribution of the paper is to present two different ways of hybridising these two dimensions and to evaluate and compare them with other alternatives. Our proposals and experiments are carried out in the specific context of publication venue recommendation.

Marlon Santiago Viñán-Ludeña and Luis M. de Campos

Emerald

Purpose: The main purpose of this paper is to analyze a tourist destination using sentiment analysis techniques with data from Twitter and Instagram to find the most representative entities (or places) and perceptions (or aspects) of the users. Design/methodology/approach: The authors used 90,725 Instagram posts and 235,755 Twitter tweets to analyze tourism in Granada (Spain) to identify the important places and perceptions mentioned by travelers on both social media sites. The authors used several approaches for sentiment classification for English and Spanish texts, including deep learning models. Findings: The best results in a test set were obtained using a bidirectional encoder representations from transformers (BERT) model for Spanish texts and Tweeteval for English texts, and these were subsequently used to analyze the data sets. It was then possible to identify the most important entities and aspects, and this, in turn, provided interesting insights for researchers, practitioners, travelers and tourism managers so that services could be improved and better marketing strategies formulated. Research limitations/implications: The authors propose a Spanish-Tourism-BERT model for performing sentiment classification together with a process to find places through hashtags and to reveal the important negative aspects of each place. Practical implications: The study enables managers and practitioners to implement the Spanish-BERT model with the Spanish Tourism data set that the authors released for adoption in applications to find both positive and negative perceptions. Originality/value: This study presents a novel approach to applying sentiment analysis in the tourism domain. First, a way to evaluate the different existing models and tools is presented; second, a model is trained using BERT (a deep learning model); third, an approach to identifying the acceptance of the places of a destination through hashtags is presented; and, finally, the reasons why users express positivity (or negativity) are evaluated by identifying entities and aspects.

Marlon Santiago Viñán-Ludeña and Luis M. de Campos

Emerald

Purpose: The main aim of this paper is to build an approach to analyze the tourist content posted on social media. The approach incorporates information extraction, cleaning, data processing, descriptive and content analysis and can be used on different social media platforms such as Instagram, Facebook, etc. This work proposes an approach to social media analytics in traveler-generated content (TGC), and the authors use Twitter to apply this study and examine data about the city and the province of Granada. Design/methodology/approach: In order to identify what people are talking about and posting on social media regarding places, events, restaurants, hotels, etc., the authors propose the following approach for data collection, cleaning and data analysis. The authors first identify the main keywords for the place of study. A descriptive analysis is subsequently performed, and this includes post metrics with geo-tagged analysis and user metrics, retweets and likes, comments, videos, photos and followers. The text is then cleaned. Finally, content analysis is conducted, and this includes word frequency calculation, sentiment and emotion detection and word clouds. Topic modeling was also performed with latent Dirichlet allocation (LDA). Findings: The authors used the framework to collect 262,859 tweets about Granada. The most important hashtags are #Alhambra and #SierraNevada, and the most prolific user is @AlhambraCultura. The approach uses a seasonal context, and the posted tweets are divided into two periods (spring–summer and autumn–winter). Word frequency was calculated and, again, Granada and Alhambra are the most frequent words in both periods in English and Spanish. The topic models show the subjects that are mentioned in both languages, and although there are certain small differences in terms of language and season, the Alhambra, Sierra Nevada and gastronomy stand out as the most important topics. Research limitations/implications: It is extremely difficult to identify sarcasm, posts may be ambiguous, users may use both Spanish and English words in their tweets and tweets may contain spelling mistakes, colloquialisms or even abbreviations. Multilingualism also represents an important limitation since it is not clear how tweets written in different languages should be processed. The size of the data set is also an important factor since the greater the amount of data, the better the results. One of the largest limitations is the small number of geo-tagged tweets, as geo-tagging would provide information about the place where the tweet was posted and opinions of it. Originality/value: This study proposes an interesting way to analyze social media data, bridging tourism and social media literature in the data analysis context, and contributes to discovering patterns and features of the tourism destination through social media. The approach used provides the prospective traveler with an overview of the most popular places and the major posters for a particular tourist destination. From a business perspective, it informs managers of the most influential users, and the information obtained can be extremely useful for managing their tourism products in that region.

Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

Institute of Electrical and Electronics Engineers (IEEE)

In this paper, we study the venue recommendation problem in order to help researchers identify a journal or conference to which to submit a given paper. A common approach for tackling this problem is to build profiles to define the scope of each venue. These profiles are then compared against the target paper. In our approach, we study how clustering techniques can be used to construct topic-based profiles and how an information retrieval-based approach can be used to obtain the final recommendations. Additionally, we explore how the use of authorship (which supplements the information) helps to improve the recommendations.

Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, and Luis Redondo-Expósito

Springer Science and Business Media LLC

Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, and Luis Redondo-Expósito

Elsevier BV

Marlon Santiago Viñán-Ludeña, Luis M. de Campos, Luis-Roberto Jacome-Galarza, and Javier Sinche-Freire

Springer Singapore

Luis M. de Campos, Andrés Cano, Javier G. Castellano, and Serafín Moral

Walter de Gruyter GmbH

Gene Regulatory Networks (GRNs) are known as the most adequate instrument to provide a clear insight and understanding of cellular systems. One of the most successful techniques to reconstruct GRNs using gene expression data is Bayesian networks (BNs), which have proven to be an ideal approach for heterogeneous data integration in the learning process. Nevertheless, the incorporation of prior knowledge has been achieved by using prior beliefs or by using networks as a starting point in the search process. In this work, the utilization of different kinds of structural restrictions within algorithms for learning BNs from gene expression data is considered. These restrictions codify prior knowledge in such a way that a BN should satisfy them. Therefore, one aim of this work is to provide a detailed review of the use of prior knowledge and gene expression data to infer GRNs from BNs, but the major purpose of this paper is to investigate whether structural learning algorithms for BNs from expression data can achieve better outcomes by exploiting this prior knowledge through structural restrictions. In the experimental study, it is shown that this new way of incorporating prior knowledge leads to better reverse-engineered networks.

Eduardo Vicente-López, Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

Springer Science and Business Media LLC

César Albusac, Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

ACM

Nowadays it is more and more frequent that Web users search for professionals in order to find people who can help solve any problem in a given field. This is called expert finding. A particular case is when users are interested in scientific researchers. The associated problem is to get, given a query that expresses a topic of interest for a user, a set of researchers who are experts on it. One of the difficulties in tackling the problem is to identify the topics in which a professional is expert. In this paper, we face this problem from a content-based recommendation perspective and we present a method where, starting from the articles published by each researcher and a query, the expert researchers are obtained. We also present a new document collection, called PMSC-UGR, specifically designed for evaluation in the field of expert finding and document filtering.

Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, and Luis Redondo-Expósito

Elsevier BV

Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

Elsevier BV

Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, and Luis Redondo-Expósito

SCITEPRESS - Science and Technology Publications

César Albusac, Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

Springer International Publishing

Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

World Scientific Pub Co Pte Lt

One step towards breaking down barriers between citizens and politicians is to help people identify those politicians who share their concerns. This paper is set in the field of expert finding and is based on the automatic construction of politicians’ profiles from their speeches on parliamentary committees. These committee-based profiles are treated as documents and are indexed by an information retrieval system. Given a query representing a citizen’s concern, a profile ranking is then obtained. In the final step, the different results for each candidate are combined in order to obtain the final politician ranking. We explore the use of classic combination strategies for this purpose and present a new approach that improves state-of-the-art performance and which is more stable under different conditions. We also introduce a two-stage model where the identification of a broader concept (such as the committee) is used to improve the final politician ranking.

Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

SAGE Publications

In the context of e-government and more specifically that of parliament, this paper tackles the problem of finding Members of Parliament (MPs) according to their profiles which have been built from their speeches in plenary or committee sessions. The paper presents a common solution for two problems: firstly, a member of the public who is concerned about a certain issue might want to know who the best MP is for dealing with their problem (recommending task); and secondly, each new piece of textual information that reaches the house must be correctly allocated to the appropriate MP according to its content (filtering task). This paper explores both these ways of searching for relevant people conceptually by encapsulating them into a single problem: that of searching for the relevant MP’s profile given an information need. Our research work proposes various profile construction methods (by selecting and weighting appropriate terms) and compares these using different retrieval models to evaluate their quality and suitability for different types of information needs in order to simulate real and common situations.

Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, and Luis Redondo-Expósito

Springer International Publishing

Eduardo Vicente-López, Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

Elsevier BV

Luis M. de Campos, Juan M. Fernández-Luna, and Juan F. Huete

ACM

In a parliamentary setting, citizens could be interested in knowing those Members of Parliament (MPs) who are working in different areas or involved in the resolution of some people's problems. These topics of interest are usually represented by means of a profile. In this paper, the politicians' profiles are built considering the speeches in parliamentary sessions. However, in most cases a single profile is not the best alternative to represent MPs' interests because the specific terms related to a given topic are mixed with others, so that the MPs' preferences are diluted. The alternative is to build different subprofiles, each containing the most representative keywords for one topic, creating in this way a richer representation. We present a first approach to build subprofiles based on the MPs' speeches in different committee and plenary sessions, which will be compared, in terms of performance, to monolithic profiles for an MP content-based recommendation task.

Silvia Acid and Luis M. de Campos

Springer International Publishing

Eduardo Vicente-López, Luis M. de Campos, Juan M. Fernández-Luna, Juan F. Huete, Antonio Tagua-Jiménez, and Carmen Tur-Vigil

Springer Science and Business Media LLC